Flaws and Scandal Surround Bot Development

Imagine that a piece of technology you have integrated into your home, one that helps you with everyday information such as what the weather is like, how to change a plug socket or what the next step is in the recipe you’re following, began laughing in the middle of the night without any interaction! Well, this is exactly what happened to many owners of Alexa.

Amazon had programmed the virtual assistant to deliver a “ha ha ha” in response to “Alexa, can you laugh?” However, when the device misinterpreted audio, it treated many owners to a fairly terrifying cackle that occurred spontaneously! We are sure this is not something consumers would want to be woken up by at 3 o’clock in the morning! To fix this flaw, Amazon changed the response to “Sure I can laugh tee-hee”, but it does raise the question: why would you want to ask Alexa this in the first place? Maybe this is something she couldn’t even answer herself.

Other assistants such as Siri and Google Home have also been programmed with cheeky answers intended to provide some light humour. However, they tend to come across as just downright odd! So is creeping consumers out really the best foundation for bots and virtual assistants?

Alternatively, There’s Facebook’s Method…

Facebook has recently had its own data privacy scandal, in which the data of 50 million users was leaked. It has since been reported that François Chollet, an AI specialist at Google, has called for a boycott of Facebook, tweeting: “We’re looking at a powerful entity that builds fine-grained psychological profiles of over two billion humans, that run large-scale behaviour manipulation experiments, and that aims at developing the best AI the world has ever seen. Personally it really scares me.”

CNBC has commented that “Scandals show that artificial intelligence is still not up to many critical jobs in the technology sector”, as thousands of jobs are being added that “AI can’t handle”, including roles in security.

How to Keep Consumers Safe and Happy

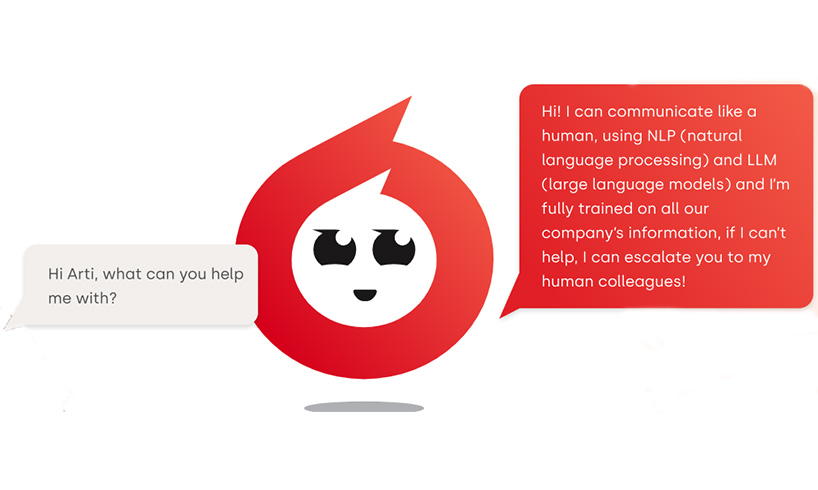

Firstly, having human representatives help with consumer enquiries via live chat ensures that odd eventualities are kept to a minimum and that the customer is not cackled at! It means that in any situation, whether of a sensitive nature or not, the most appropriate response is provided. Could you imagine if a chatbot started laughing at a visitor trying to make an enquiry with the police or a charity?

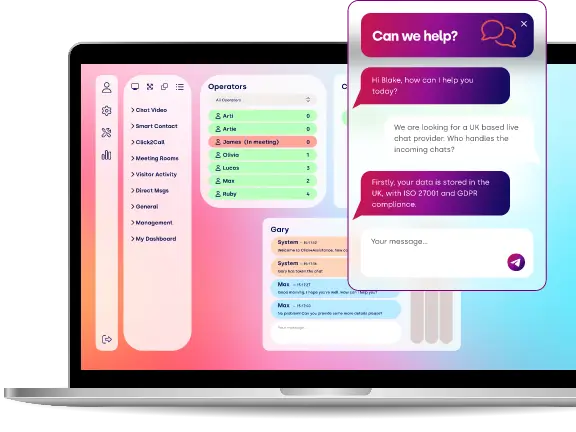

Secondly, use suppliers that ensure your company’s and your consumers’ data is processed securely and in line with data protection regulations. Data transferred via the Click4Assistance chat box for website solution is encrypted at rest and in transit with high levels of encryption, providing the same level of security that consumers would expect from a payment page.

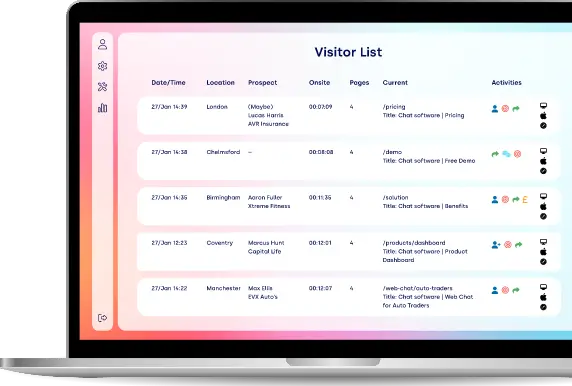

All changes made within the software by users are fully audited, and every chat interaction can be viewed in real time and stored once completed. This ensures that managers can analyse representatives’ actions and confirm they are providing the service as expected.

Click4Assistance is the UK’s leading provider of live chat box for website software and has been supplying communication tools to businesses for over 10 years. We believe that automation has some part to play in customer service by helping representatives respond more quickly, but AI and chatbots are too immature to advise consumers. For more information on the Click4Assistance solution, contact our team by calling 01268 524628 or emailing theteam@click4assistance.co.uk.

Sources:

VentureBeat, 2018, Alexa’s laughing bouts highlight a fatal flaw in bot development

The Times, 2018, AI chief demands boycott of Facebook

CNBC, 2018, Facebook data privacy scandal has one silver lining: Thousands of new jobs AI can’t handle